Every enterprise data platform makes a fundamental choice about how it stores data. For decades, that choice was dictated by the vendor: Teradata stored data in its proprietary format, Oracle in its tablespaces, SAS in its .sas7bdat datasets, and Informatica in its persistent caches. The data was inseparable from the platform that created it. Switching vendors meant migrating every byte, rewriting every query, and retraining every team. The switching cost was the business model.

Apache Iceberg represents a structural break from this pattern. It is an open table format that stores data in standard files (most commonly Parquet), described by open metadata and accessible from any compatible engine. And enterprises are adopting it at a pace that suggests the era of proprietary data formats is ending.

The Cost of Vendor Lock-In

Proprietary data formats create dependencies that compound over time. A SAS dataset cannot be read by Spark without a conversion tool. A Teradata table cannot be queried from Trino without extraction and reloading. An Informatica persistent cache is accessible only through Informatica. These format dependencies extend well beyond storage: they lock in compute engines, development tools, operational procedures, and human expertise.

The financial cost is substantial and predictable. Vendor license fees escalate annually, typically 5-15% per year, because the switching cost ensures customers will pay rather than migrate. A large enterprise running SAS, Teradata, and Informatica might spend $10-30 million per year on license fees alone, before accounting for infrastructure, support contracts, and the opportunity cost of being unable to adopt modern tools.
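To make the compounding concrete, here is a small sketch that projects an annual license bill under a fixed escalation rate. The $10 million starting fee and five-year horizon are illustrative assumptions, not figures from any vendor contract.

```python
def project_license_fees(base_fee: float, annual_increase: float, years: int) -> list[float]:
    """Return the fee charged in each year, compounding the increase annually."""
    return [base_fee * (1 + annual_increase) ** year for year in range(years)]


# Hypothetical inputs: a $10M starting bill, projected over five years.
low = project_license_fees(10_000_000, 0.05, 5)
high = project_license_fees(10_000_000, 0.15, 5)

print(f"Year-5 fee at 5% per year:  ${low[-1]:,.0f}")
print(f"Year-5 fee at 15% per year: ${high[-1]:,.0f}")
print(f"Five-year total at 15% per year: ${sum(high):,.0f}")
```

At the upper end of the range, the same bill grows to roughly $17.5 million in its fifth year without any change in workload.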

But the hidden costs are often larger. When a data science team cannot access data without going through a SAS or Informatica pipeline, innovation slows. When a streaming use case requires data that is locked in a Teradata table, architects build complex ETL bridges instead of direct access patterns. When a cloud migration is blocked because the on-premise data format has no cloud-native equivalent, the entire modernization program stalls.

The data itself becomes a hostage to the platform that wrote it. And the ransom increases every year.

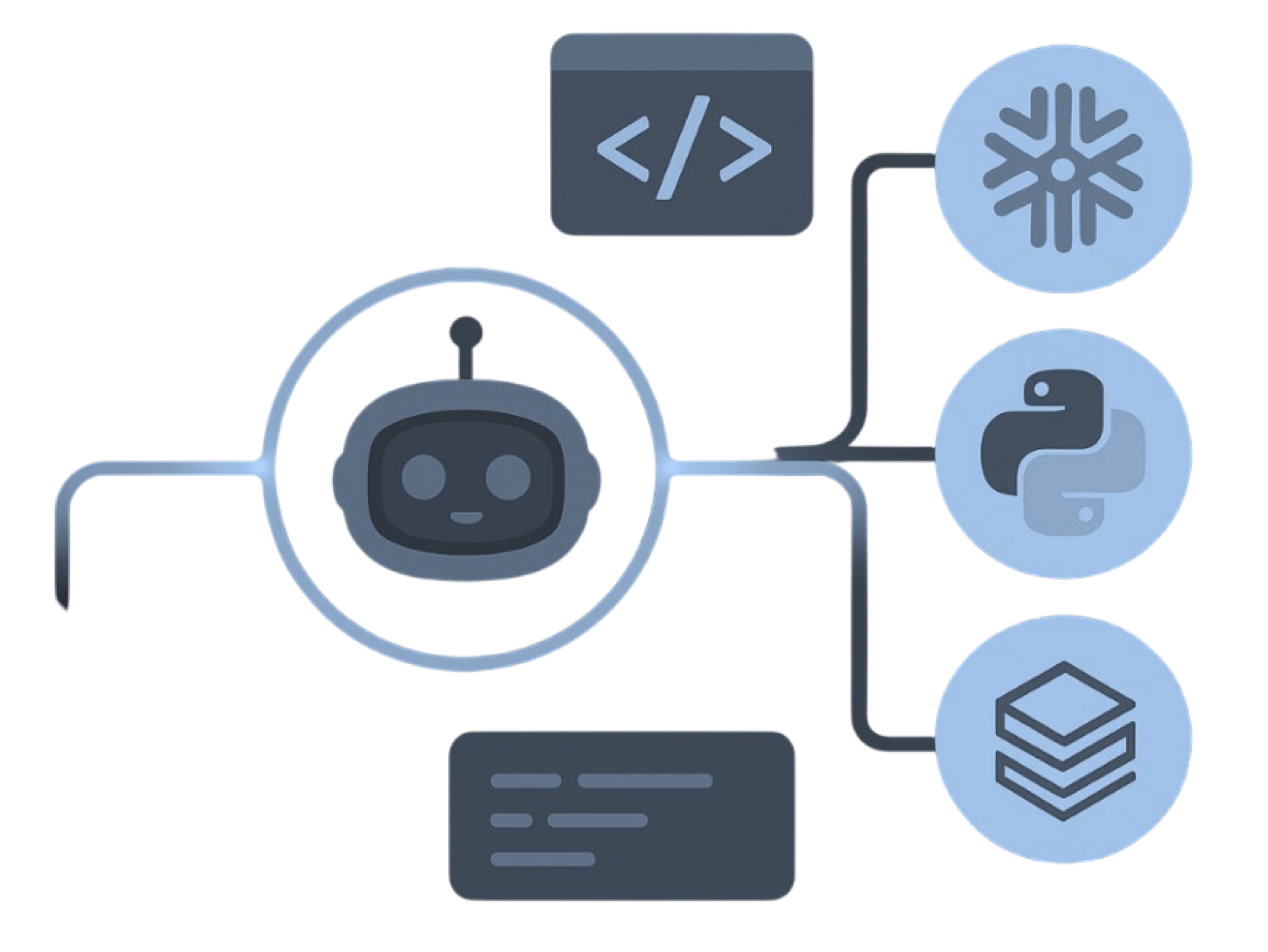

Apache Iceberg — enterprise migration powered by MigryX

Iceberg vs. Delta Lake vs. Hudi

Iceberg is not the only open table format. Delta Lake (created by Databricks) and Apache Hudi (created at Uber) offer overlapping capabilities. Understanding the differences helps enterprises make an informed choice.

| Dimension | Apache Iceberg | Delta Lake | Apache Hudi |
|---|---|---|---|
| Origin | Netflix, Apache-governed | Databricks, Linux Foundation | Uber, Apache-governed |
| Governance | Apache Software Foundation | Linux Foundation (Delta Lake project) | Apache Software Foundation |
| Primary Engine | Multi-engine by design | Spark-optimized, growing multi-engine | Spark/Flink, smaller ecosystem |
| Hidden Partitioning | Yes (first-class feature) | No (uses Hive-style partitions) | No (uses Hive-style partitions) |
| Schema Evolution | Full (add, drop, rename, reorder) | Add and rename columns | Full (add, drop, rename) |
| Time Travel | Yes (snapshot-based) | Yes (transaction log-based) | Yes (timeline-based) |
| ACID Transactions | Yes (optimistic concurrency) | Yes (optimistic concurrency) | Yes (MVCC) |
| Partition Evolution | Yes (metadata-only) | No (requires rewrite) | No (requires rewrite) |
| Multi-Engine Support | Spark, Trino, Flink, Snowflake, Athena, Dremio, BigQuery, StarRocks | Spark, Trino (via connectors), Flink (limited), Snowflake (via UniForm) | Spark, Flink, Trino (limited) |
| Streaming Support | Flink integration, incremental reads | Structured Streaming (Spark-native) | Strong (designed for streaming) |
| Catalog Standard | REST Catalog specification | Unity Catalog, Delta Sharing | No standard catalog spec |

All three formats are open source and store data in Parquet files. The critical differentiators are multi-engine support and governance. Iceberg's REST catalog specification and broad engine compatibility make it the most vendor-neutral choice. Delta Lake's deep integration with Databricks makes it the natural choice for all-Databricks shops. Hudi's streaming-first design appeals to organizations with heavy streaming workloads on Flink.

The market trajectory is clear. Iceberg's multi-engine support has driven the broadest adoption across cloud providers and compute engines. Snowflake, Databricks (via UniForm), AWS, Google Cloud, and dozens of independent engines have all invested in first-class Iceberg support. This breadth of adoption creates a self-reinforcing cycle: more engines support Iceberg, which drives more adoption, which drives more engine support.
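The hidden partitioning row in the comparison above is easier to appreciate with a toy model. The sketch below is not Iceberg's implementation. It only imitates the idea: the table derives a day value from a timestamp column when data is written, and uses the same derivation to prune files when a query filters on that timestamp. The class name and file names are hypothetical, and the model assumes each file holds rows for a single day.

```python
from datetime import datetime, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def day_transform(ts: datetime) -> int:
    """Map a UTC timestamp to whole days since 1970-01-01, like a day partition transform."""
    return (ts - EPOCH).days


class ToyHiddenPartitionTable:
    """Toy table that tracks data files by a partition value derived from a timestamp."""

    def __init__(self) -> None:
        self.files_by_day: dict[int, list[str]] = {}

    def append(self, event_ts: datetime, file_name: str) -> None:
        # The writer never supplies a partition column; the table derives it.
        self.files_by_day.setdefault(day_transform(event_ts), []).append(file_name)

    def plan_scan(self, start: datetime, end: datetime) -> list[str]:
        # The reader filters on the timestamp; pruning happens behind the scenes.
        lo, hi = day_transform(start), day_transform(end)
        return [
            name
            for day in sorted(self.files_by_day)
            if lo <= day <= hi
            for name in self.files_by_day[day]
        ]


def utc(*args: int) -> datetime:
    return datetime(*args, tzinfo=timezone.utc)


table = ToyHiddenPartitionTable()
table.append(utc(2024, 3, 1, 23, 59), "events-a.parquet")
table.append(utc(2024, 3, 2, 0, 1), "events-b.parquet")
table.append(utc(2024, 3, 9, 12, 0), "events-c.parquet")

selected = table.plan_scan(utc(2024, 3, 2), utc(2024, 3, 3))
print(selected)
```

Because queries never reference a physical partition column, the partitioning scheme can change later without rewriting the queries that depend on it.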

MigryX: Idiomatic Code, Not Line-by-Line Translation

The difference between MigryX and manual migration is not just speed — it is code quality. MigryX generates idiomatic, platform-optimized code that leverages native features of your target platform. A SAS DATA step does not become a clunky row-by-row loop — it becomes a clean, vectorized DataFrame operation. A PROC SQL query does not become a literal translation — it becomes an optimized query that takes advantage of your platform’s pushdown capabilities.

Open Governance & the REST Catalog

An open data format is necessary but not sufficient for true vendor independence. If the metadata catalog is proprietary, the lock-in simply shifts from the storage layer to the metadata layer. Iceberg addresses this with the REST catalog specification.

The Iceberg REST catalog defines a standard HTTP API for creating, altering, dropping, and listing Iceberg tables. Any catalog service that implements this specification can manage Iceberg tables, and any engine that speaks the REST catalog protocol can discover and access those tables. This means your table metadata is as portable as your table data.
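As a rough illustration of what "a standard HTTP API" means in practice, the sketch below builds the method and URL for a few table operations. The route shapes follow the REST catalog OpenAPI definition as commonly documented, but the host name is hypothetical and details such as authentication, the optional prefix, and multi-level namespaces are left out, so treat this as an orientation aid and check the specification before relying on it.

```python
from typing import Optional
from urllib.parse import quote


def tables_url(base_url: str, namespace: str, table: Optional[str] = None) -> str:
    """Build the URL for a namespace's table collection, or for one table in it."""
    parts = ["v1", "namespaces", quote(namespace, safe=""), "tables"]
    if table is not None:
        parts.append(quote(table, safe=""))
    return base_url.rstrip("/") + "/" + "/".join(parts)


def list_tables_request(base_url: str, namespace: str) -> tuple[str, str]:
    return ("GET", tables_url(base_url, namespace))


def load_table_request(base_url: str, namespace: str, table: str) -> tuple[str, str]:
    return ("GET", tables_url(base_url, namespace, table))


def drop_table_request(base_url: str, namespace: str, table: str) -> tuple[str, str]:
    return ("DELETE", tables_url(base_url, namespace, table))


# Hypothetical catalog endpoint, namespace, and table name.
CATALOG = "https://catalog.example.com/"
for method, url in (
    list_tables_request(CATALOG, "sales"),
    load_table_request(CATALOG, "sales", "orders"),
    drop_table_request(CATALOG, "sales", "orders"),
):
    print(method, url)
```

Any engine that can issue these requests can discover the same tables, which is what keeps the catalog from becoming a new point of lock-in.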

The ecosystem of catalog implementations is growing rapidly:

- AWS Glue Data Catalog: Amazon's managed metadata catalog supports Iceberg natively. Tables registered in Glue are accessible from Athena, EMR, Redshift Spectrum, and any engine that reads the Glue catalog.

- Nessie: A Git-like catalog that enables branching, tagging, and merging of table metadata. Developers can create isolated branches for experimentation without affecting production tables.

- Polaris: Snowflake's open-source Iceberg catalog, implementing the REST specification. Tables managed by Polaris are accessible from Snowflake and any REST-catalog-compatible engine.

- Tabular: A managed Iceberg catalog service (now part of Databricks) that provides SaaS catalog management with role-based access control, automated maintenance, and usage analytics.

- Unity Catalog: Databricks' unified governance layer supports Iceberg tables alongside Delta tables, enabling organizations to manage both formats through a single interface.

The practical implication is that organizations can choose their catalog based on operational requirements (managed vs. self-hosted, cloud provider alignment, governance features) without creating a new lock-in point. If you start with Glue and later decide to move to Polaris, the migration is a metadata operation, not a data migration.
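A toy model shows why a catalog move can be a metadata operation. It assumes, as a simplification, that a catalog is just a mapping from table identifiers to the location of each table's current metadata file. The class, bucket paths, and table names are hypothetical, and real catalogs add access control, locking, and commit handling on top of this.

```python
class ToyCatalog:
    """Toy catalog: table identifier -> location of the table's current metadata file."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.pointers: dict[str, str] = {}

    def register_table(self, identifier: str, metadata_location: str) -> None:
        if identifier in self.pointers:
            raise ValueError(f"{identifier} is already registered in {self.name}")
        self.pointers[identifier] = metadata_location


def migrate(source: ToyCatalog, target: ToyCatalog) -> int:
    """Re-register every table in the target catalog; no data files are copied."""
    for identifier, location in source.pointers.items():
        target.register_table(identifier, location)
    return len(source.pointers)


old = ToyCatalog("old-catalog")
old.register_table("sales.orders", "s3://example-bucket/sales/orders/metadata/00042.metadata.json")
old.register_table("sales.customers", "s3://example-bucket/sales/customers/metadata/00007.metadata.json")

new = ToyCatalog("new-catalog")
moved = migrate(old, new)
print(f"Registered {moved} tables in {new.name} without touching data files")
```

In a real cutover, writers would need to be paused or redirected so that only one catalog accepts commits for a given table at a time.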

MigryX precision parser — Deep AST-level analysis ensures every construct is understood before conversion begins

Platform-Specific Optimization by MigryX

MigryX maintains deep knowledge of every target platform’s strengths and best practices. When converting to Snowflake, it leverages Snowpark and native SQL functions. When targeting Databricks, it uses PySpark DataFrame operations optimized for distributed execution. When generating dbt models, it follows dbt best practices for modularity and testability. This platform awareness is what makes MigryX output production-ready from day one.

Enterprise Adoption Patterns

Enterprises are adopting Iceberg through three primary patterns, each addressing a different starting point and set of constraints.

Pattern 1: On-Premise Data Warehouse to Cloud Iceberg

Organizations running Teradata, Oracle Exadata, Netezza, or other on-premise data warehouses migrate to Iceberg tables on cloud object storage (S3, ADLS, GCS). The proprietary database is replaced by open Parquet files described by Iceberg metadata, queried by Spark, Trino, or Snowflake. This pattern eliminates the largest license costs and enables elastic compute scaling. For example, a Teradata system that costs $5 million per year in licensing and $2 million in hardware maintenance can be replaced by Iceberg on S3 with pay-per-query compute, which typically costs 60-80% less at equivalent workload levels.

Pattern 2: Consolidate Multiple ETL Tools onto Iceberg

Large enterprises often run multiple ETL platforms in parallel: SAS for statistical modeling, Informatica for data integration, DataStage for high-volume processing, and custom Python scripts for newer workloads. Each tool writes to its own target format. Consolidating all ETL output onto Iceberg creates a unified data layer that any downstream consumer can access. This pattern eliminates redundant data copies, simplifies governance, and reduces the total number of platforms the team must support.

Pattern 3: Add Real-Time Capability

Organizations with batch-oriented Hive tables add streaming capability by migrating to Iceberg. Apache Flink writes streaming data directly to Iceberg tables, while Spark continues to handle batch processing. Both operate on the same table, with Iceberg's ACID transactions ensuring consistency. This pattern enables near-real-time analytics without replacing the existing batch infrastructure. The same table that receives streaming updates from Flink is queryable by Trino for interactive dashboards and by Spark for batch reporting.
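The way a streaming writer and a batch writer can share one table is easier to see in a toy model of optimistic concurrency. The sketch below is an illustration under simplifying assumptions, not Iceberg's code: a commit succeeds only if the snapshot the writer started from is still current, otherwise the writer retries against the new state. The class and file names are hypothetical, and the lock stands in for the atomic pointer swap a catalog would provide.

```python
import threading
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Snapshot:
    snapshot_id: int
    files: tuple


class ToyTable:
    def __init__(self) -> None:
        self._swap_lock = threading.Lock()  # stand-in for the catalog's atomic swap
        self.snapshots = [Snapshot(0, ())]

    def current(self) -> Snapshot:
        return self.snapshots[-1]

    def commit(self, base: Snapshot, new_files: list) -> Optional[Snapshot]:
        """Publish a new snapshot only if `base` is still the current one."""
        with self._swap_lock:
            if self.current().snapshot_id != base.snapshot_id:
                return None  # another writer committed first
            snapshot = Snapshot(base.snapshot_id + 1, base.files + tuple(new_files))
            self.snapshots.append(snapshot)
            return snapshot


def append_with_retry(table: ToyTable, new_files: list, max_attempts: int = 5) -> Snapshot:
    for _ in range(max_attempts):
        committed = table.commit(table.current(), new_files)
        if committed is not None:
            return committed
    raise RuntimeError("commit kept conflicting; giving up")


table = ToyTable()
stale_base = table.current()  # the batch job reads the table state here

streamed = append_with_retry(table, ["stream-0001.parquet"])  # streaming writer commits
rejected = table.commit(stale_base, ["batch-0001.parquet"])   # batch commit on stale base
batched = append_with_retry(table, ["batch-0001.parquet"])    # batch job retries

print("stale commit accepted:", rejected is not None)
print("files visible to new readers:", batched.files)
print("files visible to a reader pinned to the earlier snapshot:", streamed.files)
```

Readers always see a complete snapshot, so a dashboard query that started before the batch commit keeps a consistent view while new queries pick up both writers' files.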

One Format, Every Engine

Iceberg's open specification means your data works with Spark, Trino, Flink, Snowflake, Databricks, Athena, and BigQuery. No copies. No conversions. No lock-in. Your data is truly yours.

The Migration Path with MigryX

For organizations ready to move from proprietary formats to Iceberg, the migration path through MigryX is systematic and automated.

MigryX begins by parsing legacy code from any supported source platform: SAS programs, Informatica mappings, DataStage jobs, Talend workflows, or SSIS packages. The parser understands the full semantics of each platform, not just the syntax. It captures transformation logic, data flow, schema definitions, and execution dependencies.

The conversion engine then generates PySpark code that writes to Iceberg tables natively. This is not a generic translation. The generated code includes Iceberg-specific table properties (compression codec, write format, sort order), partition specifications recommended by Merlin AI based on data profiling, and catalog registration statements for the target catalog service.
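To give a sense of what Iceberg-specific table settings look like, the sketch below renders Spark SQL statements for a partitioned, sorted Iceberg table. This is not MigryX output. The helper function, table name, columns, and property values are hypothetical choices for illustration, and the statements assume a Spark session already configured with an Iceberg catalog and the Iceberg SQL extensions.

```python
def render_iceberg_ddl(
    table: str,
    columns: dict[str, str],
    partition_by: list[str],
    sort_by: list[str],
    properties: dict[str, str],
) -> list[str]:
    """Render CREATE TABLE and sort-order statements for an Iceberg table in Spark SQL."""
    column_sql = ",\n  ".join(f"{name} {dtype}" for name, dtype in columns.items())
    property_sql = ",\n  ".join(
        f"'{key}' = '{value}'" for key, value in sorted(properties.items())
    )
    partition_sql = ", ".join(partition_by)
    sort_sql = ", ".join(sort_by)

    create = (
        f"CREATE TABLE {table} (\n  {column_sql}\n)\n"
        f"USING iceberg\n"
        f"PARTITIONED BY ({partition_sql})\n"
        f"TBLPROPERTIES (\n  {property_sql}\n)"
    )
    order = f"ALTER TABLE {table} WRITE ORDERED BY {sort_sql}"
    return [create, order]


# Hypothetical target table and settings.
statements = render_iceberg_ddl(
    table="lake.sales.orders",
    columns={
        "order_id": "BIGINT",
        "customer_id": "BIGINT",
        "order_ts": "TIMESTAMP",
        "amount": "DECIMAL(18,2)",
    },
    partition_by=["days(order_ts)"],
    sort_by=["customer_id"],
    properties={
        "write.format.default": "parquet",
        "write.parquet.compression-codec": "zstd",
    },
)

for statement in statements:
    print(statement + ";\n")
```

In a PySpark job, each rendered statement would typically be passed to `spark.sql(...)` before the first write to the table.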

Lineage is preserved throughout the conversion. Every column in the Iceberg output table can be traced back through the transformation logic to its original source column in the legacy platform. This lineage metadata is registered in the Iceberg catalog as table properties and is available to downstream governance tools.

Validation is automated. MigryX generates comparison queries that check row counts, aggregate values, schema conformance, and sample-level cell-by-cell accuracy between legacy output and Iceberg table contents. Validation reports are produced in both machine-readable (JSON) and human-readable (HTML) formats.
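The kinds of checks listed above can be sketched in a few lines. This is a minimal illustration over in-memory rows, not MigryX's validation logic. In practice the comparisons would run as queries against the legacy system and the Iceberg table. The function name, sample rows, and key column are assumptions.

```python
import json
import math


def compare_outputs(legacy: list, migrated: list, key: str, numeric_columns: list) -> dict:
    """Compare two result sets on row count, schema, aggregates, and individual cells."""
    legacy_by_key = {row[key]: row for row in legacy}
    migrated_by_key = {row[key]: row for row in migrated}

    legacy_columns = set().union(*(row.keys() for row in legacy))
    migrated_columns = set().union(*(row.keys() for row in migrated))

    aggregate_match = {
        column: math.isclose(
            sum(row[column] for row in legacy),
            sum(row[column] for row in migrated),
            rel_tol=1e-9,
        )
        for column in numeric_columns
    }

    cell_mismatches = []
    for row_key in sorted(legacy_by_key.keys() & migrated_by_key.keys()):
        for column, expected in legacy_by_key[row_key].items():
            actual = migrated_by_key[row_key].get(column)
            if expected != actual:
                cell_mismatches.append(
                    {"key": row_key, "column": column, "legacy": expected, "migrated": actual}
                )

    return {
        "row_count_match": len(legacy) == len(migrated),
        "schema_match": legacy_columns == migrated_columns,
        "aggregate_match": aggregate_match,
        "cell_mismatches": cell_mismatches,
    }


# Hypothetical sample rows: the second row differs only in the casing of `region`.
legacy_rows = [
    {"id": 1, "amount": 100.0, "region": "EU"},
    {"id": 2, "amount": 250.5, "region": "US"},
]
iceberg_rows = [
    {"id": 1, "amount": 100.0, "region": "EU"},
    {"id": 2, "amount": 250.5, "region": "us"},
]

report = compare_outputs(legacy_rows, iceberg_rows, key="id", numeric_columns=["amount"])
print(json.dumps(report, indent=2))
```

Row counts and aggregates both pass in this example, and only the cell-level comparison catches the discrepancy, which is why the layers are checked together instead of relying on totals alone.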

The result is an auditable, repeatable migration process that transforms a multi-year manual effort into a structured program with clear milestones, measurable quality gates, and traceable lineage from legacy source to Iceberg target.

The question is no longer whether to adopt open table formats. The question is how quickly you can get there without breaking what already works. MigryX answers that question with automated conversion, AI-driven optimization, and comprehensive validation.

Why MigryX Delivers Superior Migration Results

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Production-ready output: MigryX generates code that passes code review and runs in production — not prototype-quality output that needs weeks of cleanup.

- Platform optimization: Converted code leverages target platform-specific features for maximum performance and cost efficiency.

- 25+ source technologies: MigryX supports more than 25 legacy technologies, including SAS, Informatica, DataStage, and SSIS.

- Automated documentation: Every conversion decision is documented with before/after code mappings and transformation rationale.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration — turning what used to be a multi-year manual effort into a streamlined, validated process. See it in action.
